Tess Frazier is the Chief Compliance Officer at Class. She’s built her career in education technology and believes a strong compliance, data privacy, and security program benefits everyone.

Artificial Intelligence (AI) continues to play a growing role in our lives. It helps us find the quickest way home, recommends music and TV programs, and powers voice assistants. AI is driving useful functionality in education technology. As AI continues to evolve, it has the potential to unlock transformative innovation in education that will benefit Class customers.

Every new and powerful technology comes with risk, and AI is no exception. Harmful bias, inaccurate output, lack of transparency and accountability, and AI not fully aligned with human values are just some of the risks that need to be managed to allow for the safe and responsible use of AI. At Class, we understand that we are responsible for managing these risks and for helping our customers manage them.

The lawful, ethical, and responsible use of AI is a key priority for Class. While sophisticated generative AI tools have brought more focus on AI risks, these risks are not new. In 2023 we established a cross-functional and diverse working group (the Class AI Task Force) to develop and implement a dedicated Trust Centered AI program. That same year we formally implemented the program (see below), led by our Chief Compliance Officer and Chief Information Technology Officer, working closely with our Chief Product and Chief Technology Officers.

Our AI program is aligned with the NIST AI Risk Management Framework and the EU AI Act. Our program builds on and integrates with our SOC2-certified privacy and security risk management programs and processes. AI will continue to evolve rapidly, and at Class, we are committed to the continuous enhancement of our AI program. This will ensure we and our customers can use Class AI-powered product features safely and responsibly.

As part of our Trust Centered AI program, we are implementing the following principles. These principles are based on and aligned with the principles of the NIST AI Risk Management Framework, the EU AI Strategy, and the OECD AI Principles. The principles apply both to our internal use of AI and to the AI functionalities we are developing in products we will provide to our clients.

If you have any questions regarding our AI program, please contact us at privacy@class.com.

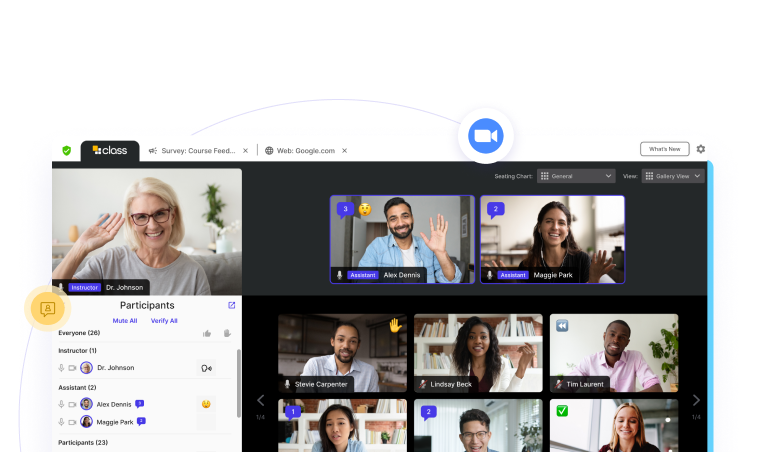

Sign up for a product demo today to learn how Class’s virtual classroom powers digital transformation at your organization.
